By Sandeep Joshi, Founder & Managing Director, Massivue

Most enterprise AI training programs are built on a flawed assumption: that one curriculum can serve every department. After 3 years of building AI adoption programs across 40+ APAC enterprises, we have learned this assumption is the single biggest reason AI initiatives plateau at 15–20% utilization. Finance does not use AI the way marketing does. HR faces risks engineering never touches. Manufacturing needs workflows that only make sense on a factory floor. This article breaks down why generic AI training fails and what works instead, department by department.

The Problem With “AI for Everyone” Programs

The typical enterprise AI rollout follows a predictable pattern.

A CHRO or CIO signs a contract with a training vendor. The vendor provides a generic curriculum: “AI for Everyone,” “Generative AI Fundamentals,” “Microsoft Copilot 101.” The L&D team runs completion campaigns across 2,000, 5,000, or 10,000 employees. Dashboards light up with completion rates of 85% or more. Leadership declares the rollout a success.

Ninety days later, someone pulls the actual utilization data. Across our client base, the number is consistently between 15% and 20%.

This is not a failure of effort. It is a failure of design.

The reason generic training has been the default is structural. One vendor is easier to procure than six. One curriculum is easier for L&D to manage than six. Vendor economics reward volume, not specificity. Every incentive in the traditional enterprise training model points toward “one program for everyone.”

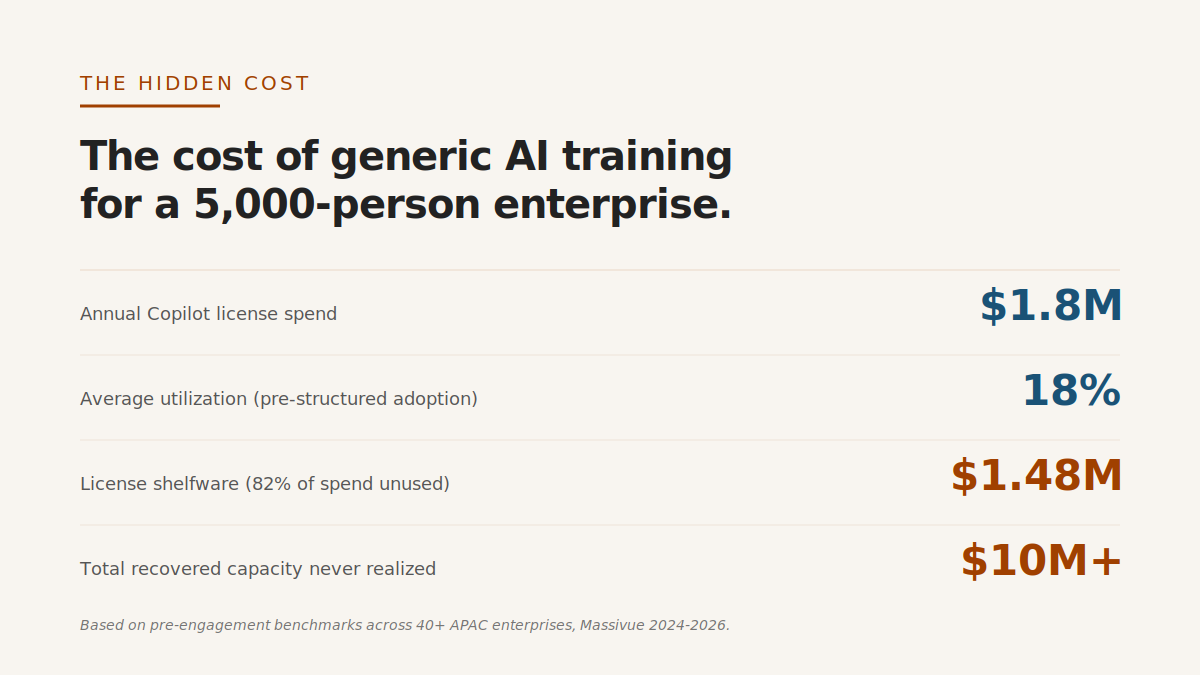

The hidden cost is enormous.

For a 5,000-person organization at $30 per Copilot license per month, that is $1.8 million in annual license spend. At 18% utilization, 82% of that spend is shelfware. Add the training program cost, the internal L&D time, and the opportunity cost of productivity that never materialized, and the true cost of a generic AI training rollout often exceeds $10 million in recovered capacity that never shows up on the P&L.

The fix is not more money. The fix is different design.

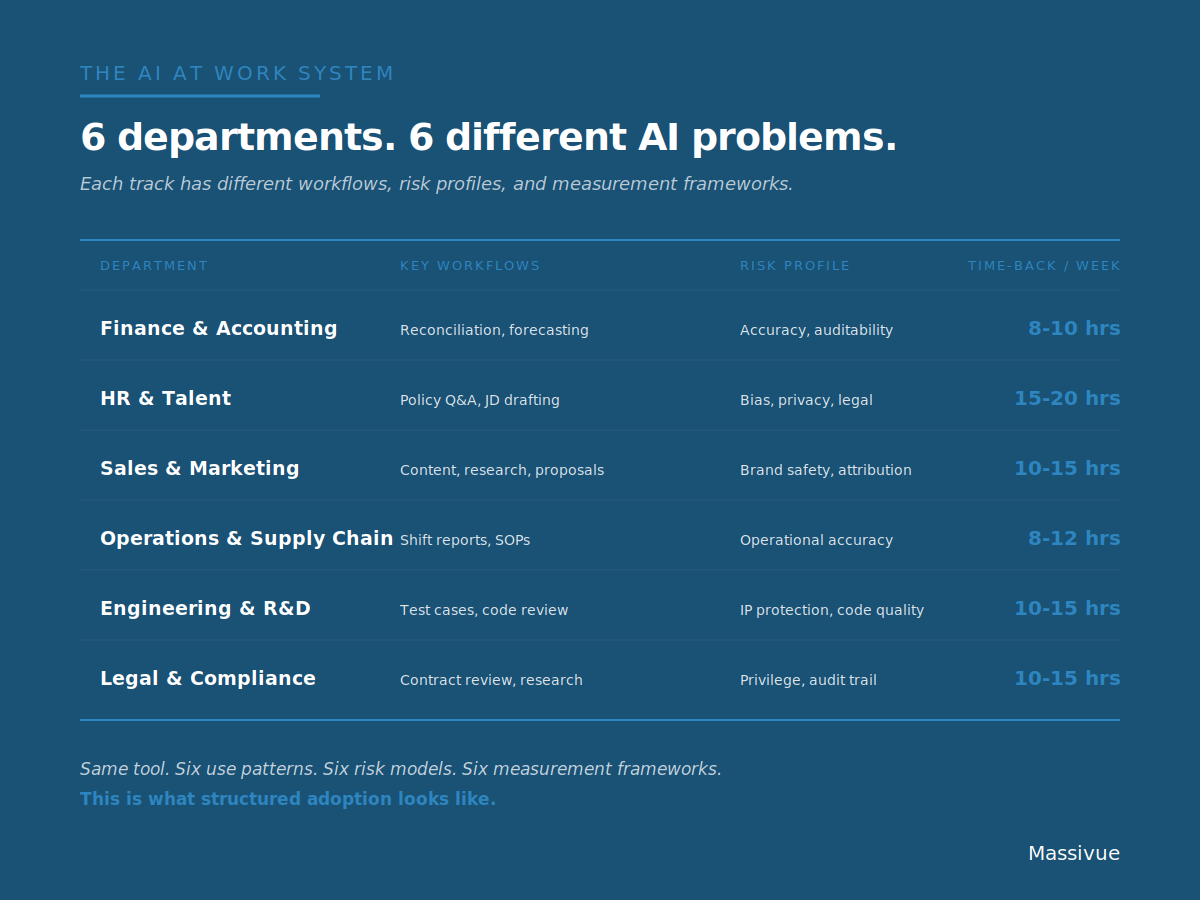

Six Departments. Six Different AI Problems.

To understand why generic training fails, start with a simple observation: departments do not do the same kind of work.

Let us walk through six of them.

Finance and Accounting

Finance teams spend their weeks inside variance analysis, reconciliations, forecasts, and board reporting. Their AI use cases live inside Excel, inside data rooms, inside compliance-driven workflows where every number needs a source.

The dominant risk profile for finance AI is accuracy and auditability. Brand safety is not a finance concern. Tone is not a finance concern. But a number that cannot be traced back to its source is a compliance nightmare.

Effective measurement for finance AI looks like:

- Close cycle time (days from period end to report issuance)

- Variance analysis completion rate

- Audit trail coverage for AI-assisted outputs

- Accuracy benchmarks versus human-only workflows

The time-back potential is significant. A senior financial analyst who moves from manual reconciliation to AI-assisted reconciliation typically recovers 8 to 10 hours per week, assuming the AI is trained on the specific templates and data structures the team already uses.

Generic AI training for finance fails because it treats the analyst as a generic knowledge worker. Finance does not need email drafting tips. It needs prompt patterns for Excel formulas, reconciliation templates, and forecast narratives.

HR and Talent

HR faces a completely different risk landscape.

The primary AI use cases inside HR include job description drafting, interview question generation, policy Q&A, onboarding content, and performance review support. These workflows live inside personal data, legal frameworks, and high-sensitivity employee communications.

The dominant risk profile for HR AI is bias, privacy, and legal exposure. A single AI-generated performance review that references protected class information can become a lawsuit. An AI-assisted job description that inadvertently uses biased language can produce an adverse impact claim.

Effective measurement for HR AI includes:

- Productivity per HR business partner (hours saved on drafting and review)

- Compliance artifact generation (clean audit trails on AI-assisted decisions)

- Bias incident rate (escalations tied to AI-generated content)

- Policy Q&A accuracy (correctness of AI responses to employee questions)

Time-back potential for an HR business partner using properly configured AI is between 15 and 20 hours per week, which makes HR one of the highest-ROI AI adoption functions in the enterprise.

Generic AI training for HR fails because it ignores the legal dimension. An HR team needs to know not just how to prompt Copilot, but which prompts produce content that can be used in a formal employee document and which cannot.

Sales and Marketing

Sales and marketing teams work with content, audience research, campaign analysis, creative iteration, and proposal generation. Their AI use cases live inside brand voice, customer data, and conversion funnels.

The risk profile is brand safety, attribution, and customer data handling. A marketing team that uses AI to generate content must ensure that content passes the brand’s tone and compliance bar. A sales team using AI on customer data must handle that data inside governance boundaries.

Effective measurement for sales and marketing AI includes:

- Campaign velocity (time from brief to launch)

- Content volume (pieces produced per cycle)

- Quality scores (acceptance rate of AI-drafted content)

- Pipeline contribution attributable to AI-assisted outbound

Time-back potential is 10 to 15 hours per team member per week, concentrated in the content drafting, audience research, and proposal generation functions.

Generic AI training for marketing fails because it does not teach brand voice alignment. Without that, AI-generated content looks generic. Without generic content being blocked, the brand’s voice erodes over quarters.

Operations and Supply Chain

Operations and supply chain teams work with shift handovers, SOP updates, incident reporting, supplier communications, demand forecasting, and inventory analysis. Their AI use cases often live on a factory floor, in a warehouse, or in a logistics control room. Not at a desk.

The risk profile is operational accuracy and compliance documentation. An incorrect SOP reference can cause a safety incident. An inaccurate shift handover note can cause a production error.

Effective measurement for operations AI includes:

- Cycle time reduction (time to complete operational reports)

- Error rates (corrections needed on AI-generated operational content)

- Documentation coverage (percentage of shifts with complete handover notes)

- Multilingual accuracy (for multilingual workforces)

Time-back potential is 8 to 12 hours per supervisor per week, heavily concentrated in documentation and reporting workflows.

Generic AI training for operations fails for reasons that become obvious the moment you visit a factory floor. The training was built for someone sitting at a desk drafting an email. The operations supervisor is standing at a line, reviewing a SOP on a phone, in a language other than English. None of the standard training addresses that reality.

Engineering and R&D

Engineering and R&D teams use AI for test case generation, code review, documentation, technical research, and prototype iteration.

The risk profile is intellectual property protection and code quality. An AI tool that sends source code to a public model creates immediate IP exposure. An AI that produces plausible-looking but subtly incorrect code creates defect debt that accumulates silently for months.

Effective measurement for engineering AI includes:

- Story completion velocity (features shipped per sprint)

- Defect rates (bugs per feature)

- Documentation coverage (percentage of code with AI-assisted documentation)

- Test coverage (percentage of functions with AI-generated tests)

Time-back potential is 10 to 15 hours per engineer per week, assuming the AI is configured with appropriate IP boundaries: private models, internal repositories, sanctioned code review tools.

Generic AI training for engineering fails because it usually ignores the private-model requirement. A training program that demos AI on public ChatGPT teaches engineers to use public tools for private IP. That is not an acceptable enterprise posture.

Legal and Compliance

Legal and compliance teams work with contract review, clause comparison, regulatory research, policy drafting, and matter management. Their AI use cases live inside privileged communications and regulated workflows.

The risk profile is privilege protection, accuracy, and complete audit trails. A privileged document passed through a public AI model can break privilege. An AI-summarized regulatory obligation that is subtly wrong can produce real compliance exposure.

Effective measurement for legal AI includes:

- Contract cycle time (time from draft to signature)

- Review thoroughness (clause-level coverage on AI-assisted review)

- Escalation rate (matters requiring senior counsel after AI-assisted first pass)

- Audit trail completeness

Time-back potential is 10 to 15 hours per counsel per week, primarily in contract review and regulatory research.

Generic AI training for legal fails because it does not address privilege or jurisdictional specificity. A legal team needs training on which AI tools preserve privilege, which do not, and how the answers differ across Singapore, India, UAE, and US law.

The Training Architecture That Works

Six departments. Six different risk profiles, workflows, measurements, and governance contexts.

Training them together is like teaching a surgeon and a plumber with the same curriculum because both “use tools.”

The training architecture that actually works has five components.

First, department-specific learning pathways, not department-specific badges. A badge with the finance logo on a generic curriculum is not department-specific. True specificity means different content, different prompts, different case studies, and different measurement for each track.

Second, role-specific prompt libraries. An HR business partner needs a prompt library that reflects HR workflows. A finance analyst needs a prompt library that reflects finance workflows. Generic prompt guides (“how to write a good prompt”) are inadequate.

Third, cohort-based delivery with department leads embedded. AI adoption is a social behavior. It requires group norms, peer support, and visible department leadership. Self-paced individual learning produces completions, not adoption.

Fourth, measurement frameworks tailored to each department’s KPIs. Finance measures close cycle time. Marketing measures campaign velocity. Operations measures cycle time and error rates. A single “utilization percentage” dashboard is inadequate.

Fifth, continuous updates as tools and regulations evolve. Microsoft Copilot in Q1 2026 is meaningfully different from Copilot in Q4 2025. Singapore’s AI Verify framework differs from India’s regulatory context. A training program that is static is a training program that is decaying.

The Measurement Framework

Enterprise AI programs that achieve 70% plus utilization share a common measurement approach. Five metrics matter more than any others.

Weekly Active Utilization (WAU%). The percentage of licensed users who actively use the AI tool in a given week. This is the most important single metric in enterprise AI. If you remember only one number, make it this.

Power User Conversion Rate. The percentage of users who sustain 3 or more workflow-level uses per week, not just single prompt attempts. Power users generate most of the ROI.

Workflow Automation Count. The number of specific workflows that have been redesigned with AI embedded, measured on a department-by-department basis. This shifts the conversation from tool usage to process improvement.

Time-Back per Active User. The verified hours saved per active user per week, measured through time-tracked tasks, not self-reported surveys. Self-reported numbers are usually inflated by a factor of two.

Output Quality Baseline. The review cycle length, rejection rate, and human intervention rate on AI-generated outputs. Rising quality scores indicate rising capability, not just rising usage.

These five metrics, measured consistently and reported monthly, produce the feedback loops that drive real adoption.

What This Looks Like in Practice

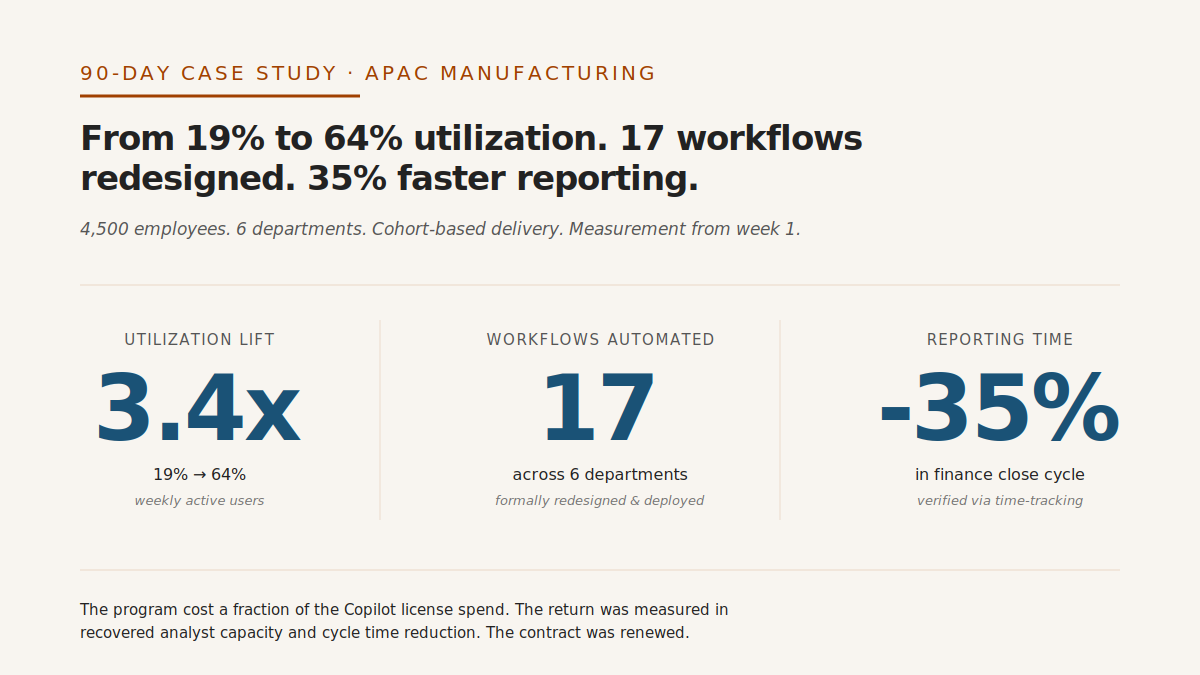

A recent engagement illustrates the pattern.

A large APAC manufacturing client deployed Microsoft Copilot to approximately 4,500 employees across six functions. After six months, utilization had settled at 19%. Leadership considered cancelling the Copilot contract.

We implemented a 90-day structured adoption program across six departments.

In weeks 1 through 2, we ran workflow mapping sessions with department leads. Each department identified the 5 most frequently executed workflows in their function. These became the anchor use cases for the training.

In weeks 3 through 6, we delivered cohort-based sessions to each department. Finance focused on reconciliation, variance analysis, and close narratives. HR focused on policy Q&A, job description drafting, and performance review support. Manufacturing focused on shift handover notes, SOP references, and incident reporting. Each cohort had its own department lead embedded as a co-facilitator.

In weeks 7 through 12, we shifted to measurement and iteration. Each department reported weekly active utilization, power user conversion, and workflow automation count. Teams whose numbers were below target received targeted coaching on specific workflow barriers.

By day 90, the results were clear.

Weekly active utilization moved from 19% to 64% across the six departments. Reporting time in finance reduced by 35%. Seventeen workflows were formally redesigned and deployed with AI embedded. The Copilot contract was renewed.

The program cost a fraction of the Copilot license spend. The return was measured in recovered analyst capacity and cycle time reduction.

The Build vs Buy Question for Department-Specific AI

A reasonable question at this point: should you build this internally, or buy structured adoption infrastructure?

Build makes sense in a narrow set of cases. If you have an in-house L&D team with deep AI expertise, department-specific enterprise architecture knowledge, and multi-year capacity, you can build this internally. Most enterprises do not.

Buy makes sense for the rest. The infrastructure (prompt libraries, measurement frameworks, department curricula, cohort facilitation materials) takes 18 to 36 months to build correctly. By the time an internal program is mature, the tools have changed.

When evaluating any AI training vendor, ask three questions.

First: is the content role-specific? Ask for the syllabus broken out by department. If marketing, finance, and manufacturing are in the same track, the content is generic.

Second: are productivity outcomes measured? Ask for the last three client measurement reports. If the vendor cannot show utilization curves, workflow automation counts, and time-back data, they are not measuring.

Third: is it enterprise-safe? Ask what happens to the data employees put into the AI during training. If the answer involves external LLMs or public models, stop the conversation.

Most vendors fail at least one of these questions. Some fail all three.

Where to Start

The question is not whether department-specific AI adoption is better. The question is which department in your organization needs it first.

The answer is usually the department with the largest gap between AI tool deployment and AI tool utilization. If finance has Copilot licenses but 12% utilization, that is probably your starting point. If HR has Copilot licenses but the team is asking whether it is safe to use, that is your starting point.

To find your answer, we have built a free AI Readiness Assessment that benchmarks your organization’s adoption posture across all 6 departments. It takes under 10 minutes. The output gives you a department-by-department view of where structured adoption would produce the highest return.

The gap between generic and department-specific is not incremental. It is the difference between 18% and 70% utilization. It is the difference between shelfware and real capability.

Start with one department. Measure honestly. Expand from there.

Ready to move your enterprise from 18% to 70% AI utilization?

Take the free AI Readiness Assessment and get a department-by-department view of where structured adoption will deliver the highest return. Under 10 minutes. No sales call required.

Sandeep Joshi is the Founder and Managing Director of Massivue, an enterprise AI adoption firm building structured learning infrastructure for APAC enterprises. Massivue has built AI adoption programs across Singapore, India, Malaysia, UAE, Australia, Indonesia, and the Philippines. Connect with Sandeep on LinkedIn to continue the conversation.